I was stuck on the 5th blog post, so I jumped back to Dr. G.'s approach to understanding tensors. It relies on tensor notation and several concepts: the Jacobian matrix, the Jacobian, covariant components, the covariant basis, contravariant components, arc length, the gradient, and the metric tensor.

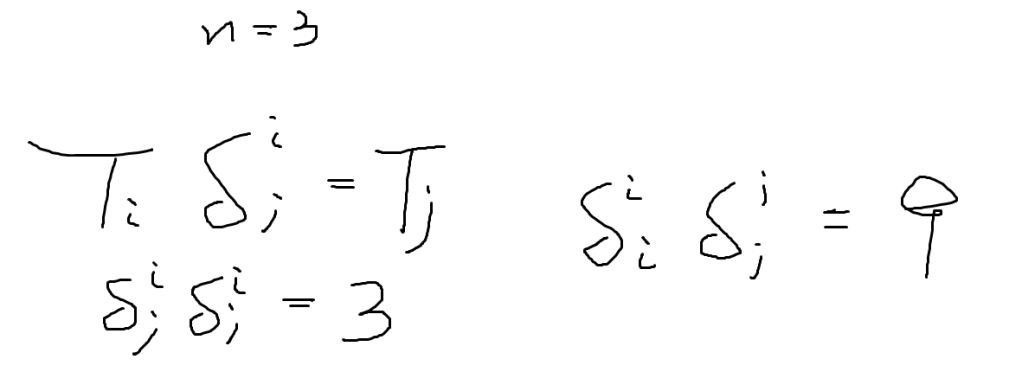

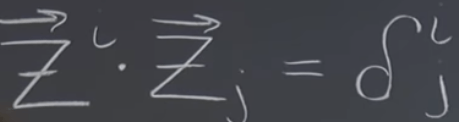

Tensor notation practice:

Note how this differs from the matrix product in linear algebra: here AB and BA are both the identity.

Tensor notation doesn't care about the order in which the factors are written, while a matrix product does. Think of tensors in terms of their entries.
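To convince myself of the "think of tensors as entries" point, here is a minimal NumPy sketch. The matrices `A` and `B` are made-up values, and `np.einsum` is only standing in for the index notation; this is an illustration, not something taken from the lectures.

```python
import numpy as np

A = np.array([[1.0, 2.0], [3.0, 4.0]])
B = np.array([[0.0, 1.0], [5.0, 6.0]])

# Index notation: C_ik = A_ij B_jk. Each factor is just a number, so
# writing B_jk A_ij instead describes exactly the same sum.
C1 = np.einsum("ij,jk->ik", A, B)
C2 = np.einsum("jk,ij->ik", B, A)

# Matrix notation: the order of the factors matters.
AB = A @ B
BA = B @ A

order_free_in_index_notation = np.allclose(C1, C2) and np.allclose(C1, AB)
order_matters_for_matrices = not np.allclose(AB, BA)
```

The indices carry the information about which entries are multiplied and summed, so the written order of the factors is free.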

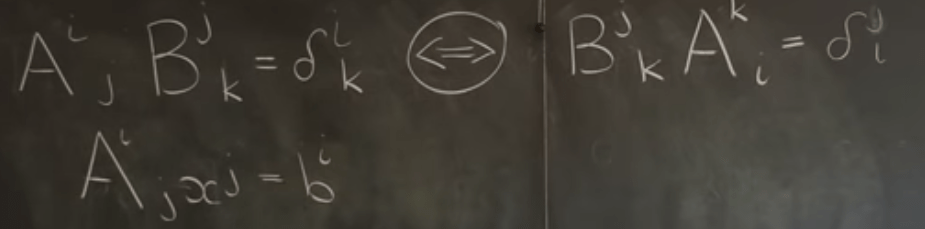

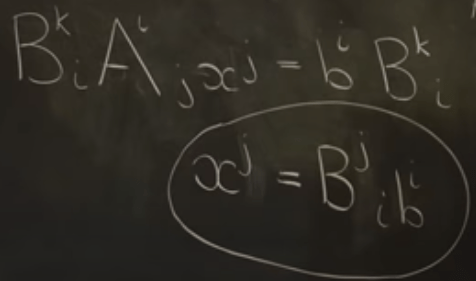

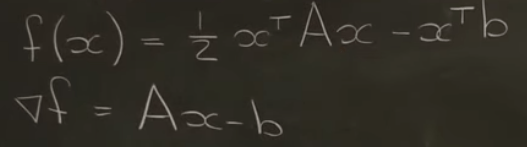

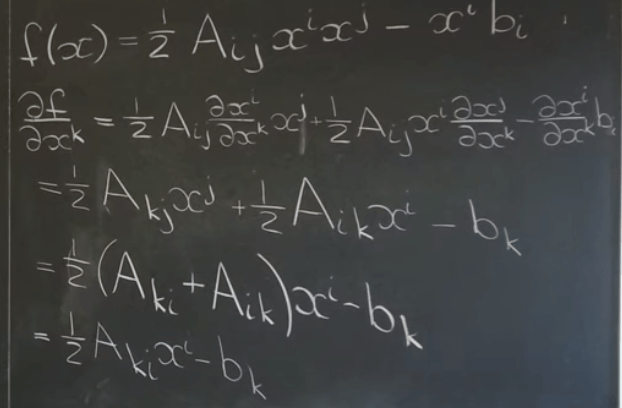

To understand the concepts, first, let’s look at the quadratic form minimization problem.

To arrive at the above result,

Then look at another problem – Decomposition by Dot Product in Tensor Notation

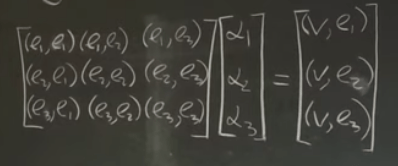

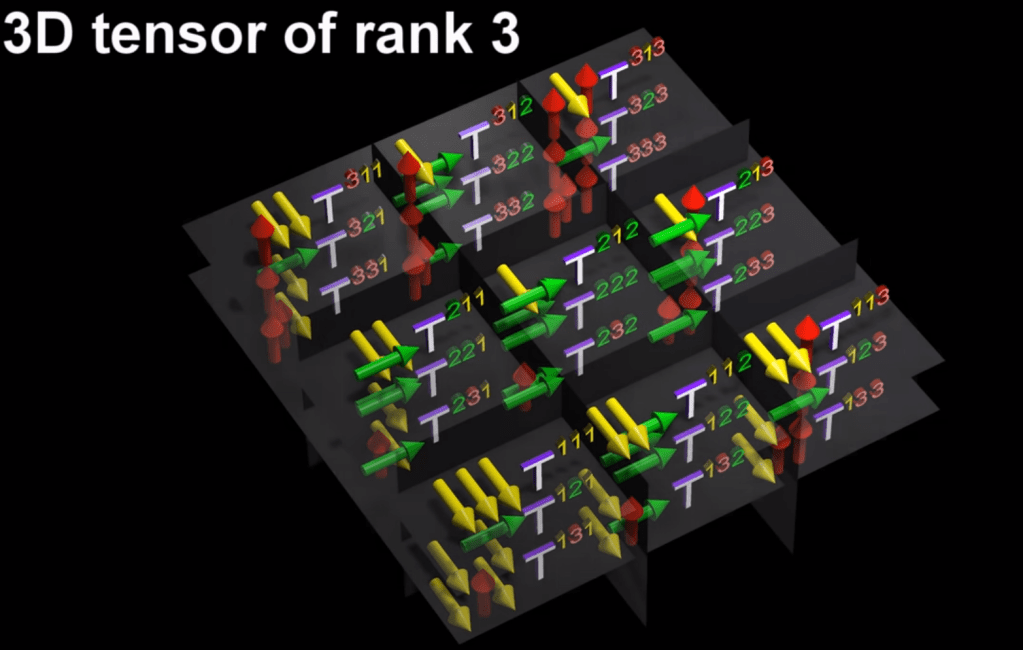

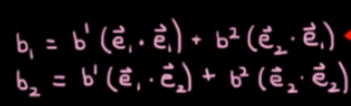

Given a basis (e1, e2, e3) and a vector V, find the coefficients of V along e1, e2, and e3 respectively.

The solution is simply to take the dot product of V with each basis vector (the right side of the equation below); the matrix on the left side is evidently the metric tensor.
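A small numerical sketch of this decomposition, assuming a made-up non-orthogonal basis `e` and vector `V` (neither is from the lecture). Dotting V = V^j e_j with each e_i gives a linear system whose matrix is the metric tensor:

```python
import numpy as np

# Hypothetical non-orthogonal basis (rows are e1, e2, e3) and a vector V.
e = np.array([[1.0, 0.0, 0.0],
              [1.0, 1.0, 0.0],
              [0.0, 1.0, 2.0]])
V = np.array([2.0, 3.0, 4.0])

# Left side: matrix of pairwise dot products e_i . e_j (the metric tensor).
metric = e @ e.T
# Right side: the dot products V . e_i.
rhs = e @ V

# Solve metric @ coeffs = rhs for the coefficients in V = sum_j coeffs[j] * e_j.
coeffs = np.linalg.solve(metric, rhs)

# Check: recombining the basis vectors with these coefficients rebuilds V.
reconstructed = coeffs @ e
```

When the basis is orthonormal, `metric` is the identity and `coeffs` is simply `rhs`.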

In orthogonal coordinates, the other two terms vanish.

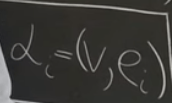

So we get the succinct formula above for the coefficients of V; the same approach gives us the contravariant components,

Hence the quest to find the contravariant components of V becomes finding the contravariant basis and dotting V with it.
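Here is a minimal sketch of that recipe, assuming a made-up 2D covariant basis and using Dr. G.'s Z notation for the variable names. The contravariant basis is built from the inverse metric, and the contravariant components then come out of a plain dot product:

```python
import numpy as np

# Hypothetical covariant basis Z_1, Z_2 (rows), not orthogonal.
Z_cov = np.array([[2.0, 0.0],
                  [1.0, 1.0]])
V = np.array([3.0, 2.0])

Z_lower = Z_cov @ Z_cov.T          # metric Z_ij = Z_i . Z_j
Z_upper = np.linalg.inv(Z_lower)   # inverse metric Z^ij
Z_contra = Z_upper @ Z_cov         # contravariant basis Z^i = Z^ij Z_j (rows)

# Duality between the two bases: Z^i . Z_j = delta^i_j.
duality = Z_contra @ Z_cov.T

# Contravariant components: V^i = V . Z^i.
V_contra = Z_contra @ V

# Check: V = V^i Z_i.
reconstructed = V_contra @ Z_cov
```

The `duality` matrix comes out as the identity, which is the relationship between the two bases that the rest of the post keeps returning to.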

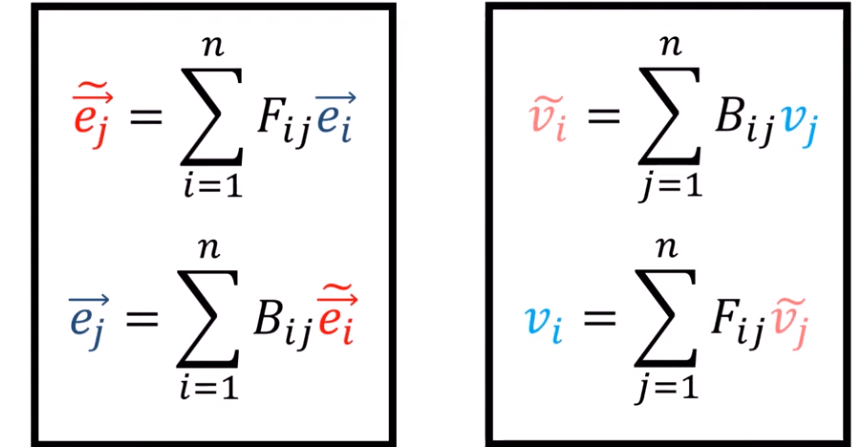

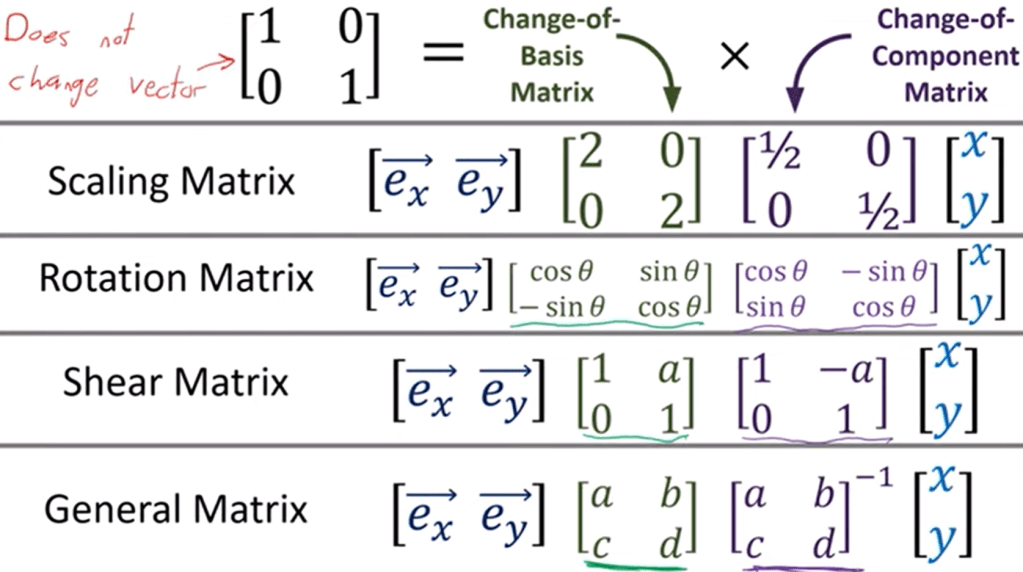

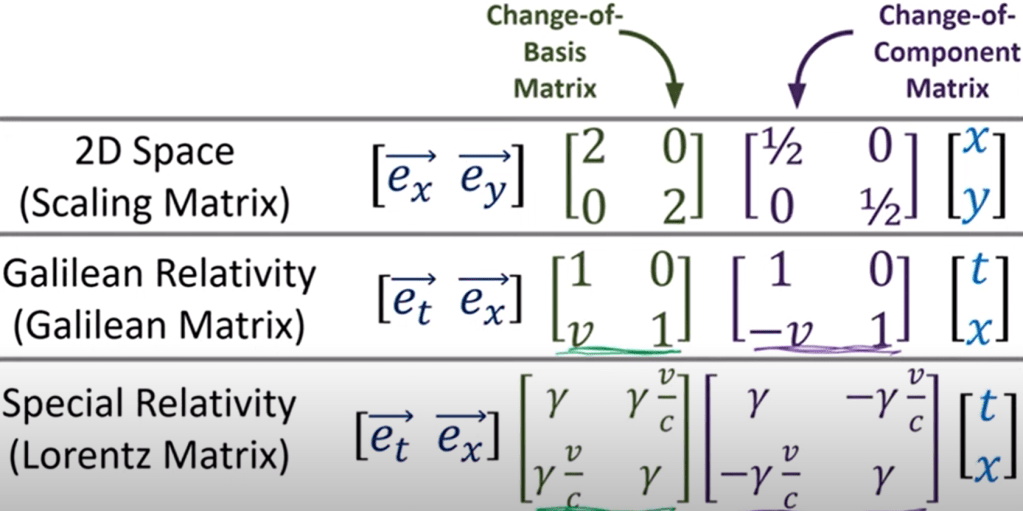

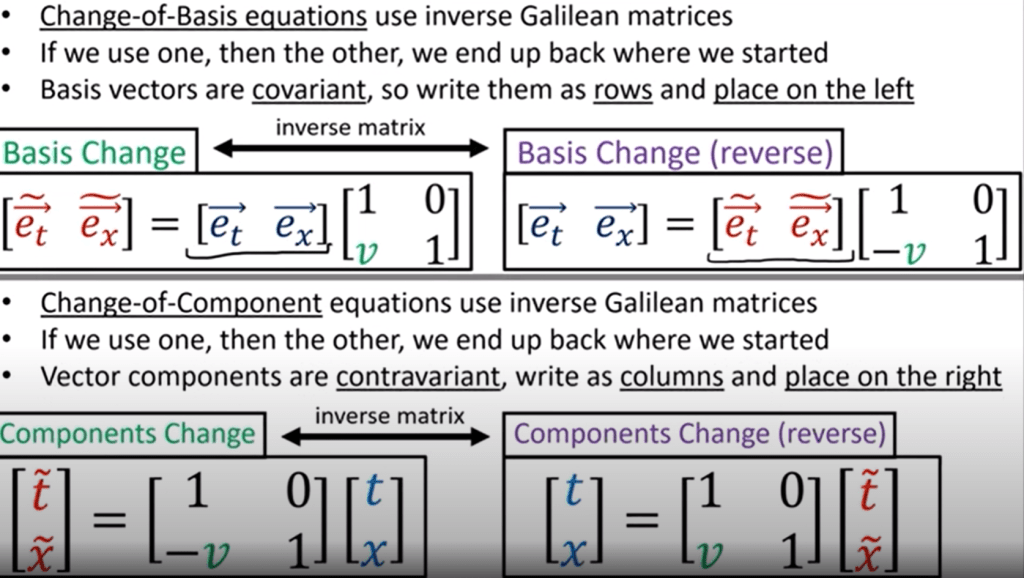

The relationship between the covariant and the contravariant bases is what eigenchris stated early on using the F (forward) and B (backward) transformations.

Compare to this

and this

Recall eigenchris's conversion between old and new coordinates for covariant and contravariant components:

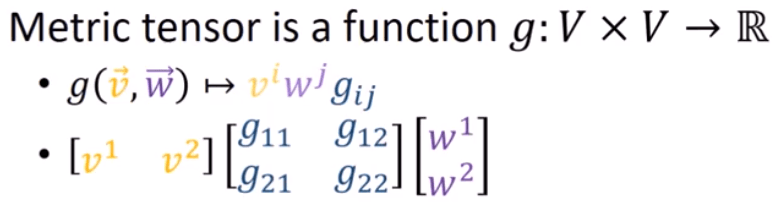

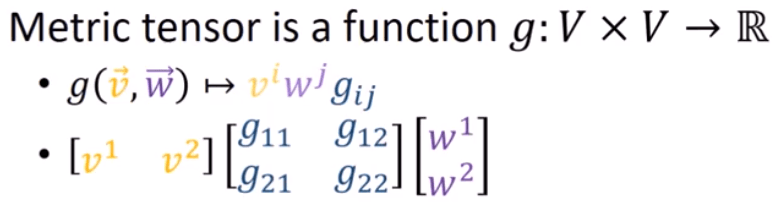

Both lecturers brought up the dot product of two vectors in this form, which includes the metric tensor: the metric tensor g takes in two vectors and spits out a real number, which is the squared length of the vector when both arguments are the same vector. Note that g drops out in an orthonormal basis (it becomes the identity), but it is definitely needed in a non-orthonormal system.
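A quick numerical check of this, as a sketch only: the skewed basis and the component values are made up, and `metric_dot` is a hypothetical helper name, not something from either lecture series.

```python
import numpy as np

def metric_dot(g, u, v):
    """Return g_ij u^i v^j for contravariant components u and v."""
    return np.einsum("ij,i,j->", g, u, v)

# Hypothetical skewed basis (rows): unit vectors e1, e2 at 60 degrees.
basis = np.array([[1.0, 0.0],
                  [0.5, np.sqrt(3.0) / 2.0]])
g = basis @ basis.T            # metric g_ij = e_i . e_j

u = np.array([1.0, 2.0])       # components of u in this basis
v = np.array([3.0, -1.0])      # components of v in this basis

# The same two vectors expressed in Cartesian coordinates, for comparison.
u_cart = u @ basis
v_cart = v @ basis

with_metric = metric_dot(g, u, v)
squared_length = metric_dot(g, u, u)

# In an orthonormal basis g is the identity, so it drops out.
orthonormal = metric_dot(np.eye(2), u, v)
```

`with_metric` matches the ordinary Cartesian dot product `u_cart @ v_cart`, and `squared_length` matches the squared norm of `u_cart`; leaving g out in the skewed basis would give the wrong number.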

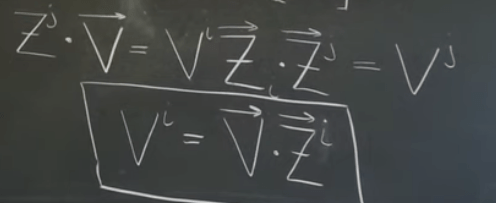

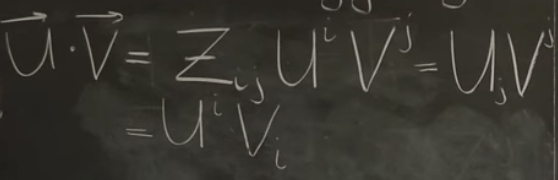

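In Dr. G.'s index juggling session, Zij is equivalent to the metric tensor g. It's beautiful that the dot product can be written either with the covariant components of U and the contravariant components of V, or with the contravariant components of U and the covariant components of V.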
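Written out, my understanding of the juggling is the following (a sketch in Dr. G.'s Z notation, where lowering an index means contracting with the metric):

$$
\mathbf{U}\cdot\mathbf{V} \;=\; Z_{ij}\,U^i V^j \;=\; U_j V^j \;=\; U^i V_i,
\qquad U_j = Z_{ij}\,U^i, \quad V_i = Z_{ij}\,V^j .
$$

The metric is absorbed into whichever vector gets its index lowered, which is why both forms give the same number.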

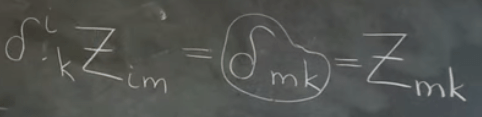

Another superb case of index juggling: delta mk can be rewritten as Zmk, so it is actually just the metric tensor, like g(i, j).
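As far as I can tell, the reasoning is that the contravariant metric is the inverse of the covariant one, so raising one index of the metric leaves the Kronecker delta (a sketch, assuming the indices sit as below):

$$
Z^{mj} Z_{jk} = \delta^m_k
\quad\Longrightarrow\quad
Z^m_{\;k} = \delta^m_k .
$$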

Stuck here…

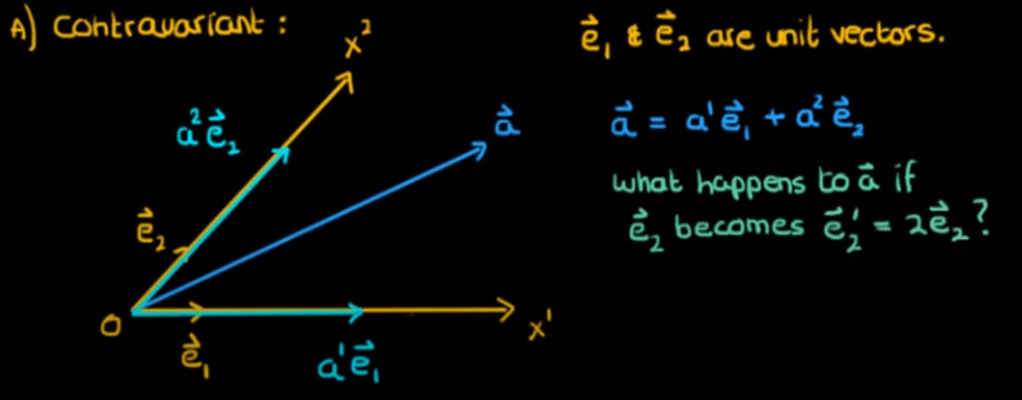

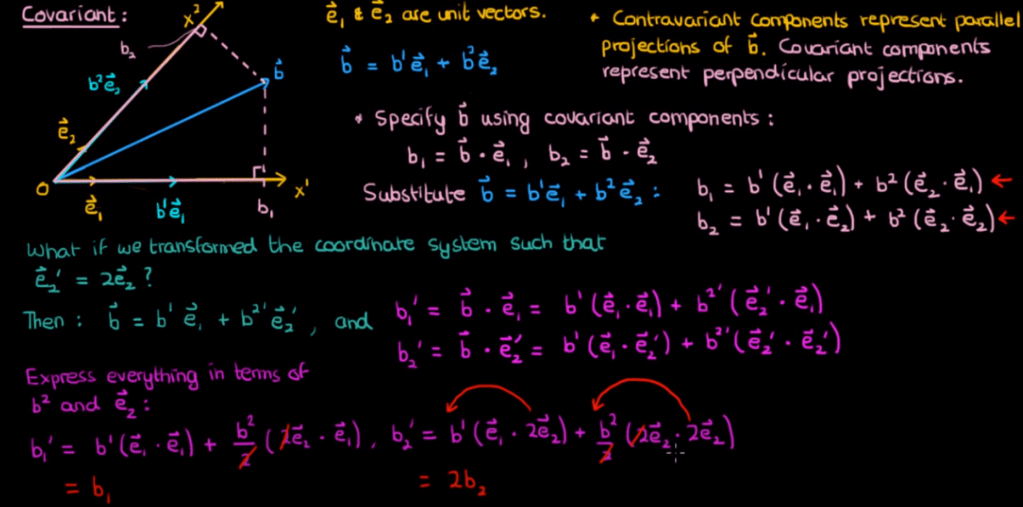

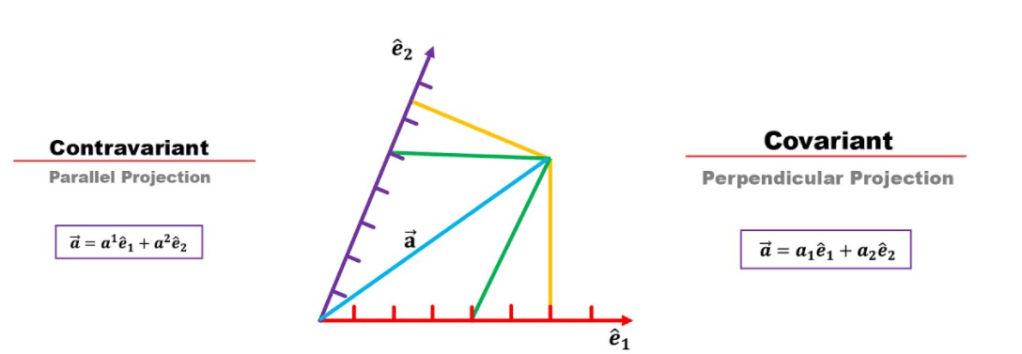

To keep contravariant and covariant straight, here is a mnemonic from Faculty of Khan: the third letter of "covariant" is v, which points downward, so covariant indices are subscripts, while the third letter of "contravariant" is n, which points upward, so contravariant indices are superscripts.

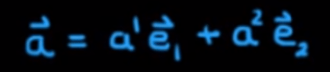

Eugene's physics video shows tensors more vividly. However, in its explanation of covariant components, it says to “describe the vector in terms of its dot product with each of the basis vectors.” Note that he is not wrong, in the sense that this describes the vector rather than defining it like

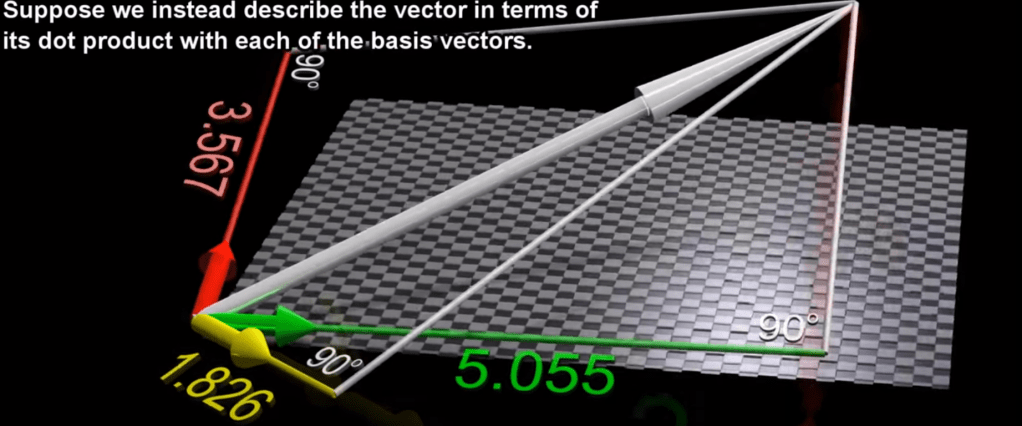

When it comes to defining a tensor, it says “what makes tensor a tensor is that when the basis vectors change, the components of the tensor would change in the same manner as they would in one of these objects.”

In a rank-3 tensor (in three dimensions), there are 3^3 = 27 combinations of basis vectors:
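The count can be checked by enumerating the ordered triples directly. This is only a counting sketch; the labels `e1`, `e2`, `e3` are placeholder strings, not actual vectors.

```python
from itertools import product

basis_labels = ["e1", "e2", "e3"]   # labels only, used for counting
rank = 3

# Every ordered triple of basis vectors gets its own component.
combinations = list(product(basis_labels, repeat=rank))
count = len(combinations)
```

In general a rank-r tensor in n dimensions has n^r components, one per ordered combination.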

Recall the dot product of two vectors producing a real number, shown below: gij is a tensor, exactly as shown in the animation above.

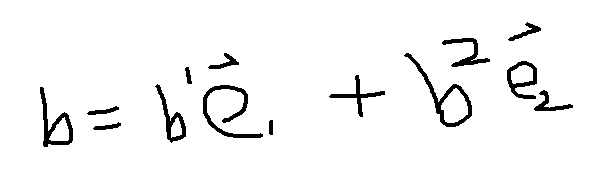

The pivotal puzzle for me now is: how do the covariant components b_1 and b_2 express/define the vector b?

Algebraically, by solving these two equations:

we can get b^1 and b^2 (superscripts, contravariant) from b_1 and b_2 (subscripts, covariant), then substitute them back into

However, geometrically, what is the visual? As can be seen below, the contravariant components come from parallel projection, while the covariant components come from perpendicular projection. Note that nothing forces a with a subscript to equal a with a superscript, because these are two different ways of drawing/reaching/defining the vector a.

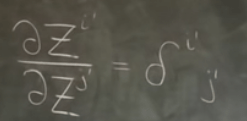

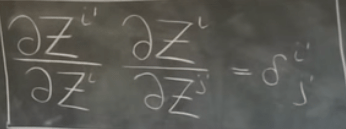

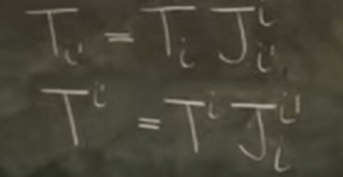
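Both routes above, the algebraic one and the geometric one, can be checked on a small example. This is a sketch with a made-up skewed 2D basis `e` and vector `b`: the covariant components are dot products with the basis vectors, the two equations are b_i = g_ij b^j, and the contravariant components rebuild b by the parallelogram rule.

```python
import numpy as np

# Hypothetical skewed 2D basis (rows are e_1, e_2) and a vector b.
e = np.array([[1.0, 0.0],
              [1.0, 1.0]])
b = np.array([3.0, 2.0])

g = e @ e.T                          # metric g_ij = e_i . e_j

# Covariant components: dot products b_i = b . e_i
# (perpendicular projections, scaled by the length of e_i).
b_cov = e @ b

# Solve the two equations b_i = g_ij b^j for the contravariant components.
b_contra = np.linalg.solve(g, b_cov)

# Contravariant components rebuild b along the basis: b = b^i e_i.
rebuilt = b_contra @ e
```

Here `b_cov` is (3, 5) while `b_contra` is (1, 2): two different descriptions of the same vector, tied together by the metric.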

Crystal clear now!!! Not yet (added on Aug 12, 2021). What is a tensor? A tensor is an object whose components transform according to the tensor rule under a change of basis. The pattern of index positions on the Jacobian matrix is as follows:

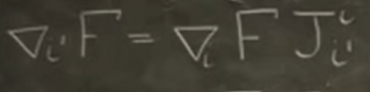

According to the pattern above, we know the gradient is covariant.

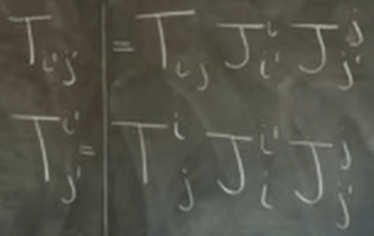
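Spelled out, the index pattern as I understand it is the following sketch, writing the Jacobians in Dr. G.'s style as $J^{i'}_{i} = \partial Z^{i'}/\partial Z^{i}$ and $J^{i}_{i'} = \partial Z^{i}/\partial Z^{i'}$ (the symbols T and F are placeholders):

$$
T^{i'} = J^{i'}_{i}\,T^{i} \quad\text{(contravariant)},
\qquad
T_{i'} = J^{i}_{i'}\,T_{i} \quad\text{(covariant)} .
$$

For the gradient of a scalar field F, the chain rule gives

$$
\frac{\partial F}{\partial Z^{i'}}
= \frac{\partial F}{\partial Z^{i}}\,\frac{\partial Z^{i}}{\partial Z^{i'}}
= J^{i}_{i'}\,\frac{\partial F}{\partial Z^{i}},
$$

which matches the covariant pattern.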

Double indexing or coordinates or basis are

Covariance and contravariance, reinforced by eigenchris:

Covariant refers to the basis vectors, written in row form, while contravariant refers to the vector components, written in column form.
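A minimal sketch of the row/column picture, assuming a made-up forward matrix `F` and old basis. The row of basis vectors is multiplied by F, the column of components by B (the inverse of F), and the vector itself does not change:

```python
import numpy as np

# Old basis vectors stored as the columns of a matrix, so the basis reads
# as a row [e_1, e_2]. Both matrices below are hypothetical values.
old_basis = np.array([[1.0, 1.0],
                      [0.0, 2.0]])
F = np.array([[2.0, 1.0],
              [0.0, 1.0]])          # forward transformation
B = np.linalg.inv(F)                # backward transformation

# Covariant: the row of basis vectors transforms with F.
new_basis = old_basis @ F

# Contravariant: the column of components transforms with B.
old_components = np.array([3.0, 2.0])
new_components = B @ old_components

# The vector itself is the same in both descriptions.
v_old = old_basis @ old_components
v_new = new_basis @ new_components
```

Because F and B cancel in `new_basis @ new_components`, the basis and the components have to transform in opposite ways for the vector to stay put.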